Implicit bias refers to the beliefs and attitudes that affect our understanding, actions and decisions in an unconscious way.

Take-home Messages

- Implicit biases are unconscious attitudes and stereotypes that can manifest in the criminal justice system, workplace, school setting, and in the healthcare system.

- Implicit bias is also known as unconscious bias or implicit social cognition.

- There are many different examples of implicit biases, spanning categories such as race, gender, and sexuality.

- These biases often arise from trying to find patterns and navigate the overwhelming stimuli in this complicated world. Culture, media, and upbringing can also contribute to the development of such biases.

- Removing these biases is a challenge, especially because we often don’t even know they exist, but research reveals potential interventions and provides hope that levels of implicit biases in the United States are decreasing.

The term implicit bias was coined in 1995 by psychologists Mahzarin Banaji and Anthony Greenwald, who argued that social behavior is largely influenced by unconscious associations and judgments (Greenwald & Banaji, 1995).

So, what is implicit bias?

Specifically, implicit bias refers to attitudes or stereotypes that affect our understanding, actions, and decisions in an unconscious way, making them difficult to control.

Since the mid-90s, psychologists have extensively researched implicit biases, revealing that, without even knowing it, we all possess our own implicit biases.

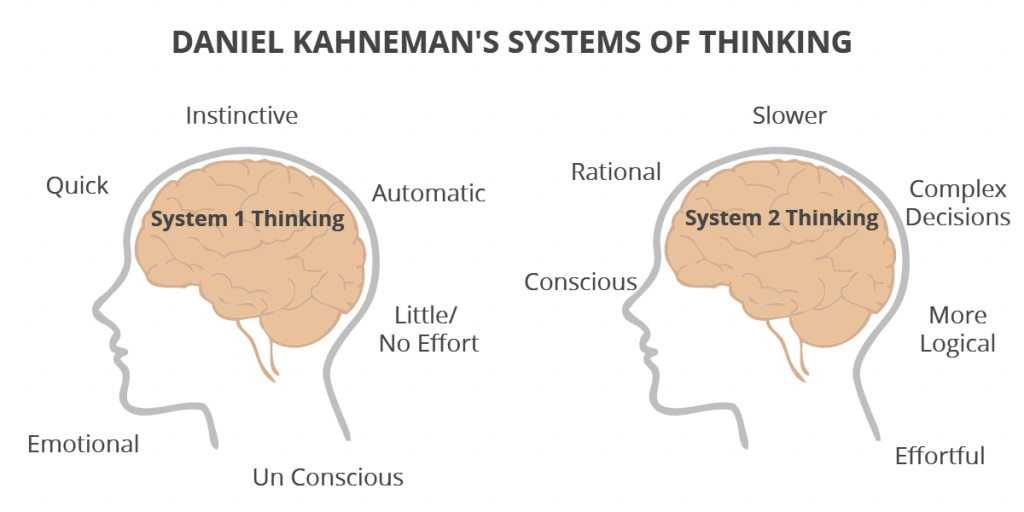

System 1 and System 2 Thinking

Kahneman (2011) distinguishes between two types of thinking: system 1 and system 2.

- System 1 is the brain’s fast, emotional, unconscious thinking mode. This type of thinking requires little effort, but it is often error-prone. Most everyday activities (like driving, talking, cleaning, etc.) rely heavily on System 1.

- System 2 is slow, logical, effortful, conscious thought, where reason dominates.

Implicit Bias vs. Explicit Bias

| | Implicit Bias | Explicit Bias |
|---|---|---|
| Definition | Unconscious attitudes or stereotypes that affect our understanding, actions, and decisions. | Conscious beliefs and attitudes about a person or group. |
| How it manifests | Can influence decisions and behavior subconsciously. | Usually apparent in a person’s language and behavior. |
| Example | A hiring manager unknowingly favors candidates who went to the same university as them. | A person making a conscious decision not to hire someone based on their ethnicity. |
| Role in Discrimination | Can lead to unintentional discrimination and bias in many areas like hiring, law enforcement, healthcare, etc. | Can lead to intentional discrimination, such as a conscious decision not to hire someone based on ethnicity. |
| Measurement | Measured using implicit association tests and other indirect methods. | Can be assessed directly through surveys, interviews, etc. |
| Prevalence in Society | Very common, as everyone holds unconscious biases to some degree. | Less common, as societal norms have shifted to view explicit bias as unacceptable. |
| How to Avoid | Improve self-awareness, undergo bias training, diversify your experiences and interactions. | Education, awareness, promoting inclusivity and diversity. |

What is meant by implicit bias?

Implicit bias (unconscious bias) refers to attitudes and beliefs outside our conscious awareness and control. Implicit biases are an example of System 1 thinking, so we are unaware they exist (Greenwald & Krieger, 2006).

An implicit bias may run counter to a person’s conscious beliefs without that person realizing it. For example, it is possible to express explicit liking of a certain social group or approval of a certain action while simultaneously being biased against that group or action on an unconscious level.

Therefore, implicit and explicit biases might differ for the same person.

It is important to understand that implicit biases can become explicit biases. This occurs when you become consciously aware of your prejudices and beliefs. They surface in your mind, leading you to choose whether to act on or against them.

What is meant by explicit bias?

Explicit biases are biases we are aware of on a conscious level (for example, feeling threatened by another group and delivering hate speech as a result). They are an example of System 2 thinking.

It is also possible that your implicit and explicit biases differ from those of your neighbor, friend, or family member. Many factors can influence how such biases develop.

What Are the Implications of Unconscious Bias?

Implicit biases become evident in many different domains of society. On an interpersonal level, they can manifest in simple daily interactions.

This occurs when certain actions (or microaggressions) make others feel uncomfortable or aware of the specific prejudices you may hold against them.

Implicit Prejudice

Implicit prejudice is the automatic, unconscious attitudes or stereotypes that influence our understanding, actions, and decisions. Unlike explicit prejudice, which is consciously controlled, implicit prejudice can occur even in individuals who consciously reject prejudice and strive for impartiality.

Unconscious racial stereotypes are a major example of implicit prejudice: an automatic preference for one race over another that the person holding it is not aware of.

This bias can manifest in small interpersonal interactions and has broader implications in society’s legal system and many other important sectors.

Examples may include holding an implicit stereotype that associates Black individuals with violence. As a result, you may cross the street at night when you see a Black man walking in your direction without even realizing why you are crossing the street.

The action taken here is an example of a microaggression. A microaggression is a subtle, automatic, and often nonverbal exchange that communicates hostile, derogatory, or negative prejudicial slights and insults toward any group (Pierce, 1970). Crossing the street communicates an implicit prejudice, even though you might not be aware of it.

Another example of implicit racial bias is a teacher complimenting a Latino student for speaking perfect English when he is a native English speaker. Here, the teacher assumed that English would not be his first language simply because he is Latino.

Gender Stereotypes

Gender biases are another common form of implicit bias. Gender biases are the ways in which we judge men and women based on traditional feminine and masculine assigned traits.

For example, a greater assignment of fame to male than female names (Banaji & Greenwald, 1995) reveals a subconscious bias that holds men at a higher level than their female counterparts. Whether you voice the opinion that men are more famous than women is independent of this implicit gender bias.

Another common implicit gender bias regards women in STEM (science, technology, engineering, and mathematics).

In school, girls are more likely to be associated with language over math. In contrast, boys are more likely to be associated with math over language (Steffens & Jelenec, 2011), revealing clear gender-related implicit biases that can ultimately go so far as to dictate future career paths.

Even if you outwardly say men and women are equally good at math, it is possible you subconsciously associate math more strongly with men without even being aware of this association.

Health Care

Healthcare is another setting where implicit biases are very present. Racial and ethnic minorities and women are subject to less accurate diagnoses, curtailed treatment options, less pain management, and worse clinical outcomes (Chapman, Kaatz, & Carnes, 2013).

Additionally, Black children are often not treated as children or given the same compassion or level of care provided for White children (Johnson et al., 2017).

It becomes evident that implicit biases infiltrate the most common sectors of society, making it all the more important to question how we can remove these biases.

LGBTQ+ Community Bias

Similar to implicit racial and gender biases, individuals may hold implicit biases against members of the LGBTQ+ community. Again, that does not necessarily mean that these opinions are voiced outwardly or even consciously recognized by the person who holds them.

Rather, these biases are unconscious. A really simple example could be asking a female friend if she has a boyfriend, assuming her sexuality and that heterosexuality is the norm or default.

In this situation, you could instead ask your friend if she is seeing someone. Several other forms of implicit bias, in categories ranging from weight to ethnicity to ability, also come into play in our everyday lives.

Legal System

Both law enforcement and the legal system shed light on implicit biases. An example of implicit bias functioning in law enforcement is the shooter bias – the tendency among the police to shoot Black civilians more often than White civilians, even when they are unarmed (Mekawi & Bresin, 2015).

This bias has been repeatedly tested in the laboratory setting, revealing an implicit bias against Black individuals. Black individuals are also disproportionately arrested and given harsher sentences, and Black juveniles are tried as adults more often than their White peers.

Black boys are also seen as less childlike, less innocent, more culpable, more responsible for their actions, and as being more appropriate targets for police violence (Goff et al., 2014).

Together, these unconscious stereotypes, which are not rooted in truth, form an array of implicit biases that are extremely dangerous and utterly unjust.

Work

Implicit biases are also visible in the workplace. One experiment that tracked the success of White and Black job applicants found that stereotypically White names received 50% more callbacks than stereotypically Black names, regardless of the industry or occupation (Bertrand & Mullainathan, 2004).

This reveals another form of implicit bias: the hiring bias – Anglicized‐named applicants receiving more favorable pre‐interview impressions than other ethnic‐named applicants (Watson, Appiah, & Thornton, 2011).
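A figure such as "50% more callbacks" is a relative difference between two callback rates, not a gap of 50 percentage points. The short sketch below shows the arithmetic. The helper names `callback_rate` and `relative_increase` and the application counts are hypothetical, chosen only for illustration; they are not the data from Bertrand and Mullainathan (2004).

```python
def callback_rate(callbacks, applications):
    """Share of applications that received a callback."""
    if applications <= 0:
        raise ValueError("applications must be positive")
    return callbacks / applications


def relative_increase(rate_a, rate_b):
    """How much higher rate_a is than rate_b, as a fraction of rate_b."""
    if rate_b <= 0:
        raise ValueError("rate_b must be positive")
    return (rate_a - rate_b) / rate_b


# Hypothetical counts, invented for illustration (not the study's data).
rate_group_1 = callback_rate(callbacks=15, applications=100)
rate_group_2 = callback_rate(callbacks=10, applications=100)

gap = relative_increase(rate_group_1, rate_group_2)
print(f"Group 1 received {gap:.0%} more callbacks than group 2")
```

With these made-up counts, the two rates differ by only five percentage points, yet the relative gap is 50%, which is why it helps to check which kind of difference a study is reporting.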

Causes

We’re susceptible to bias because of these tendencies:

We tend to seek out patterns

A key reason we develop such biases is that our brains have a natural tendency to look for patterns and associations to make sense of a very complicated world.

Research shows that even before kindergarten, children already use their group membership (e.g., racial group, gender group, age group, etc.) to guide inferences about psychological and behavioral traits.

At such a young age, they have already begun seeking patterns and recognizing what distinguishes them from other groups (Baron, Dunham, Banaji, & Carey, 2014).

And not only do children recognize what sets them apart from other groups, they believe “what is similar to me is good, and what is different from me is bad” (Cameron, Alvarez, Ruble, & Fuligni, 2001).

Children aren’t just noticing how similar or dissimilar they are to others; dissimilar people are actively disliked (Aboud, 1988).

Recognizing what sets you apart from others and then forming negative opinions about those outgroups (a social group with which an individual does not identify) contributes to the development of implicit biases.

We like to take shortcuts

Another explanation is that the development of these biases is a result of the brain’s tendency to try to simplify the world.

Mental shortcuts make it faster and easier for the brain to sort through all of the overwhelming data and stimuli we are met with every second of the day. And we take mental shortcuts all the time. Rules of thumb, educated guesses, and using “common sense” are all forms of mental shortcuts.

Implicit bias is a result of taking one of these cognitive shortcuts inaccurately (Rynders, 2019). As a result, we incorrectly rely on these unconscious stereotypes to provide guidance in a very complex world.

And especially when we are under high levels of stress, we are more likely to rely on these biases than to examine all of the relevant, surrounding information (Wigboldus, Sherman, Franzese, & Knippenberg, 2004).

Social and Cultural influences

Influences from media, culture, and your individual upbringing can also contribute to the rise of implicit associations that people form about the members of social outgroups. Media has become increasingly accessible, and while that has many benefits, it can also lead to implicit biases.

The way TV portrays individuals or the language journal articles use can ingrain specific biases in our minds.

For example, they can lead us to associate Black people with criminality or women with nursing or teaching. The way you are raised can also play a huge role. One research study found that parental racial attitudes can influence children’s implicit prejudice (Sinclair, Dunn, & Lowery, 2005).

And parents are not the only figures who can influence such attitudes. Siblings, the school setting, and the culture in which you grow up can also shape your explicit beliefs and implicit biases.

Implicit Association Test (IAT)

What sets implicit biases apart from other forms is that they are subconscious – we don’t know if we have them.

However, researchers have developed the Implicit Association Test (IAT) tool to help reveal such biases.

The Implicit Association Test (IAT) is a psychological assessment to measure an individual’s unconscious biases and associations. The test measures how quickly a person associates concepts or groups (such as race or gender) with positive or negative attributes, revealing biases that may not be consciously acknowledged.

The IAT requires participants to categorize negative and positive words together with either images or words (Greenwald, McGhee, & Schwartz, 1998).

Tests are taken online and must be performed as quickly as possible. The faster you pair certain words with the faces of a category, the stronger the association you are taken to hold about that category.

For example, the Race IAT requires participants to categorize White faces and Black faces and negative and positive words. The relative speed of association of Black faces with negative words is used as an indication of the level of anti-Black bias.
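To make "relative speed of association" concrete, the sketch below computes a simplified score from response times in two blocks of trials. It is an illustration only, not the scoring procedure used by any published IAT: the function name `association_score`, the block labels, and the response times are all hypothetical, and real scoring involves further steps such as handling errors and extreme response times.

```python
from statistics import mean, stdev


def association_score(block_a_ms, block_b_ms):
    """Standardized difference in mean response time between two blocks.

    block_a_ms: response times (ms) when one pairing shares a response key
                (e.g., White faces + positive words, Black faces + negative words).
    block_b_ms: response times (ms) for the reversed pairing.
    A positive score means responses were faster in block A than in block B.
    """
    if len(block_a_ms) < 2 or len(block_b_ms) < 2:
        raise ValueError("each block needs at least two response times")
    # Standard deviation across all trials from both blocks.
    spread = stdev(list(block_a_ms) + list(block_b_ms))
    if spread == 0:
        raise ValueError("response times must vary to compute a score")
    return (mean(block_b_ms) - mean(block_a_ms)) / spread


# Hypothetical response times in milliseconds, invented for illustration.
block_a = [610, 655, 590, 640, 700]
block_b = [780, 820, 760, 905, 850]

score = association_score(block_a, block_b)
print(f"score = {score:.2f}")
```

Dividing by the spread of all response times is a design choice in this sketch: it keeps a generally slow responder from receiving a larger score than a fast one for the same relative difference between blocks.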

Professor Brian Nosek and colleagues tested more than 700,000 subjects. They found that more than 70% of White subjects more easily associated White faces with positive words and Black faces with negative words, concluding that this was evidence of implicit racial bias (Nosek, Greenwald, & Banaji, 2007).

Outside of lab testing, it is very difficult to know if we do, in fact, possess these biases. The fact that they are so hard to detect is in the very nature of this form of bias, making them very dangerous in various real-world settings.

How to Reduce Implicit Bias

Because of the harmful nature of implicit biases, it is critical to examine how we can begin to remove them.

Meditation

Practicing mindfulness is one potential way, as it reduces the stress and cognitive load that otherwise leads to relying on such biases.

A 2016 study found that brief meditation decreased unconscious bias against Black people and elderly people (Lueke & Gibson, 2016), providing initial insight into the usefulness of this approach and paving the way for future research on this intervention.

Adjust your perspective

Another method is perspective-taking – looking beyond your own point of view so that you can consider how someone else may think or feel about something.

Researcher Belinda Gutierrez implemented a videogame called “Fair Play,” in which players assume the role of a Black graduate student named Jamal Davis.

As Jamal, players experience subtle race bias while completing “quests” to obtain a science degree.

Gutierrez hypothesized that participants who were randomly assigned to play the game would have greater empathy for Jamal and lower implicit race bias than participants randomized to read narrative text (not perspective-taking) describing Jamal’s experience (Gutierrez et al., 2014). Her hypothesis was supported, illustrating the benefits of perspective-taking in increasing empathy towards outgroup members.

Training

Specific implicit bias training has been incorporated in different educational and law enforcement settings. Research has found that diversity training improved men’s implicit attitudes toward women in STEM (Jackson, Hillard, & Schneider, 2014).

Training programs designed to target and help overcome implicit biases may also be beneficial for police officers (Plant & Peruche, 2005), but there is not enough conclusive evidence to completely support this claim. One pitfall of such training is a potential rebound effect.

Actively trying to inhibit stereotyping can result in the bias eventually becoming stronger than if it had not been suppressed in the first place (Macrae, Bodenhausen, Milne, & Jetten, 1994). This is very similar to the white bear problem that is discussed in many psychology curricula.

This concept refers to the psychological process whereby deliberate attempts to suppress certain thoughts make them more likely to surface (Wegner & Schneider, 2003).

Education

Education is crucial. Understanding what implicit biases are, how they can arise, and how to recognize them in yourself and others is incredibly important in working towards overcoming such biases.

Learning about other cultures or outgroups and what language and behaviors may come off as offensive is critical as well. Education is a powerful tool that can extend beyond the classroom through books, media, and conversations.

On the bright side, levels of implicit bias in the United States have been declining.

From 2007 to 2016, implicit biases changed towards neutrality for sexual orientation, race, and skin-tone attitudes (Charlesworth & Banaji, 2019), demonstrating that it is possible to overcome these biases.

Books for further reading

As mentioned, education is extremely important. Here are a few places to get started in learning more about implicit biases:

- Biased: Uncovering the Hidden Prejudice That Shapes What We See, Think, and Do by Jennifer Eberhardt

- Blindspot by Anthony Greenwald and Mahzarin Banaji

- Implicit Racial Bias Across the Law by Justin Levinson and Robert Smith

Keywords and Terminology

To find materials on implicit bias and related topics, search databases and other tools using the following keywords:

| | |
|---|---|
| “implicit bias” | “implicit gender bias” |
| “unconscious bias” | “implicit prejudices” |
| “hidden bias” | “implicit racial bias” |
| “cognitive bias” | “Implicit Association Test” or IAT |
| “implicit association” | “implicit social cognition” |
| bias | prejudices |
| “prejudice psychological aspects” | stereotypes |

FAQs

Is unconscious bias the same as implicit bias?

Yes, unconscious bias is the same as implicit bias. Both terms refer to the biases we carry without awareness or conscious control, which can affect our attitudes and actions toward others.

In what ways can implicit bias impact our interactions with others?

Implicit bias can impact our interactions with others by unconsciously influencing our attitudes, behaviors, and decisions. This can lead to stereotyping, prejudice, and discrimination, even when we consciously believe in equality and fairness.

It can affect various domains of life, including workplace dynamics, healthcare provision, law enforcement, and everyday social interactions.

What are some implicit bias examples?

Some examples of implicit biases include assuming a woman is less competent than a man in a leadership role, associating certain ethnicities with criminal behavior, or believing that older people are not technologically savvy.

Other examples include perceiving individuals with disabilities as less capable or assuming that someone who is overweight is lazy or unmotivated.

References

Aboud, F. E. (1988). Children and prejudice. B. Blackwell.

Banaji, M. R., & Greenwald, A. G. (1995). Implicit gender stereotyping in judgments of fame. Journal of Personality and Social Psychology, 68 (2), 181.

Baron, A. S., Dunham, Y., Banaji, M., & Carey, S. (2014). Constraints on the acquisition of social category concepts. Journal of Cognition and Development, 15 (2), 238-268.

Bertrand, M., & Mullainathan, S. (2004). Are Emily and Greg more employable than Lakisha and Jamal? A field experiment on labor market discrimination. American economic review, 94 (4), 991-1013.

Cameron, J. A., Alvarez, J. M., Ruble, D. N., & Fuligni, A. J. (2001). Children’s lay theories about ingroups and outgroups: Reconceptualizing research on prejudice. Personality and Social Psychology Review, 5 (2), 118-128.

Chapman, E. N., Kaatz, A., & Carnes, M. (2013). Physicians and implicit bias: how doctors may unwittingly perpetuate health care disparities. Journal of general internal medicine, 28 (11), 1504-1510.

Charlesworth, T. E., & Banaji, M. R. (2019). Patterns of implicit and explicit attitudes: I. Long-term change and stability from 2007 to 2016. Psychological science, 30 (2), 174-192.

Goff, P. A., Jackson, M. C., Di Leone, B. A. L., Culotta, C. M., & DiTomasso, N. A. (2014). The essence of innocence: consequences of dehumanizing Black children. Journal of personality and social psychology, 106 (4), 526.

Greenwald, A. G., & Banaji, M. R. (1995). Implicit social cognition: attitudes, self-esteem, and stereotypes. Psychological review, 102 (1), 4.

Greenwald, A. G., McGhee, D. E., & Schwartz, J. L. (1998). Measuring individual differences in implicit cognition: the implicit association test. Journal of personality and social psychology, 74 (6), 1464.

Greenwald, A. G., & Krieger, L. H. (2006). Implicit bias: Scientific foundations. California Law Review, 94 (4), 945-967.

Gutierrez, B., Kaatz, A., Chu, S., Ramirez, D., Samson-Samuel, C., & Carnes, M. (2014). “Fair Play”: a videogame designed to address implicit race bias through active perspective taking. Games for health journal, 3 (6), 371-378.

Jackson, S. M., Hillard, A. L., & Schneider, T. R. (2014). Using implicit bias training to improve attitudes toward women in STEM. Social Psychology of Education, 17 (3), 419-438.

Johnson, T. J., Winger, D. G., Hickey, R. W., Switzer, G. E., Miller, E., Nguyen, M. B., … & Hausmann, L. R. (2017). Comparison of physician implicit racial bias toward adults versus children. Academic pediatrics, 17 (2), 120-126.

Kahneman, D. (2011). Thinking, fast and slow. Macmillan.

Lueke, A., & Gibson, B. (2016). Brief mindfulness meditation reduces discrimination. Psychology of Consciousness: Theory, Research, and Practice, 3 (1), 34.

Macrae, C. N., Bodenhausen, G. V., Milne, A. B., & Jetten, J. (1994). Out of mind but back in sight: Stereotypes on the rebound. Journal of personality and social psychology, 67 (5), 808.

Mekawi, Y., & Bresin, K. (2015). Is the evidence from racial bias shooting task studies a smoking gun? Results from a meta-analysis. Journal of Experimental Social Psychology, 61, 120-130.

Nosek, B. A., Greenwald, A. G., & Banaji, M. R. (2007). The Implicit Association Test at age 7: A methodological and conceptual review. Automatic processes in social thinking and behavior, 4, 265-292.

Pierce, C. (1970). Offensive mechanisms. The black seventies, 265-282.

Plant, E. A., & Peruche, B. M. (2005). The consequences of race for police officers’ responses to criminal suspects. Psychological Science, 16 (3), 180-183.

Rynders, D. (2019). Battling Implicit Bias in the IDEA to Advocate for African American Students with Disabilities. Touro L. Rev., 35, 461.

Sinclair, S., Dunn, E., & Lowery, B. (2005). The relationship between parental racial attitudes and children’s implicit prejudice. Journal of Experimental Social Psychology, 41 (3), 283-289.

Steffens, M. C., & Jelenec, P. (2011). Separating implicit gender stereotypes regarding math and language: Implicit ability stereotypes are self-serving for boys and men, but not for girls and women. Sex Roles, 64 (5-6), 324-335.

Watson, S., Appiah, O., & Thornton, C. G. (2011). The effect of name on pre‐interview impressions and occupational stereotypes: the case of black sales job applicants. Journal of Applied Social Psychology, 41 (10), 2405-2420.

Wegner, D. M., & Schneider, D. J. (2003). The white bear story. Psychological Inquiry, 14 (3-4), 326-329.

Wigboldus, D. H., Sherman, J. W., Franzese, H. L., & Knippenberg, A. V. (2004). Capacity and comprehension: Spontaneous stereotyping under cognitive load. Social Cognition, 22 (3), 292-309.

Further Information

Test Yourself for Bias

- Project Implicit (IAT Test) From Harvard University

- Implicit Association Test From the Social Psychology Network

- Test Yourself for Hidden Bias From Teaching Tolerance

Listen

- How The Concept Of Implicit Bias Came Into Being With Dr. Mahzarin Banaji, Harvard University. Author of Blindspot: hidden biases of good people. 5:28 minutes; includes a transcript

- Understanding Your Racial Biases With John Dovidio, Ph.D., Yale University. From the American Psychological Association. 11:09 minutes; includes a transcript

- Talking Implicit Bias in Policing With Jack Glaser, Goldman School of Public Policy, University of California Berkeley. 21:59 minutes

- Implicit Bias: A Factor in Health Communication With Dr. Winston Wong, Kaiser Permanente. 19:58 minutes

- Bias, Black Lives and Academic Medicine Dr. David Ansell on Your Health Radio (August 1, 2015). 21:42 minutes

Videos

- Uncovering Hidden Biases Google talk with Dr. Mahzarin Banaji, Harvard University

- Impact of Implicit Bias on the Justice System 9:14 minutes

- Students Speak Up: What Bias Means to Them 2:17 minutes

- Weight Bias in Health Care From Yale University 16:56 minutes

- Gender and Racial Bias In Facial Recognition Technology 4:43 minutes

Journal Articles

- An implicit bias primer Mitchell, G. (2018). An implicit bias primer. Virginia Journal of Social Policy & the Law, 25, 27–59.

- Implicit Association Test at age 7: A methodological and conceptual review Nosek, B. A., Greenwald, A. G., & Banaji, M. R. (2007). The Implicit Association Test at age 7: A methodological and conceptual review. Automatic processes in social thinking and behavior, 4, 265-292.

- Implicit Racial/Ethnic Bias Among Health Care Professionals and Its Influence on Health Care Outcomes: A Systematic Review Hall, W. J., Chapman, M. V., Lee, K. M., Merino, Y. M., Thomas, T. W., Payne, B. K., … & Coyne-Beasley, T. (2015). Implicit racial/ethnic bias among health care professionals and its influence on health care outcomes: a systematic review. American Journal of public health, 105 (12), e60-e76.

- Reducing Racial Bias Among Health Care Providers: Lessons from Social-Cognitive Psychology Burgess, D., Van Ryn, M., Dovidio, J., & Saha, S. (2007). Reducing racial bias among health care providers: lessons from social-cognitive psychology. Journal of general internal medicine, 22 (6), 882-887.

- Integrating implicit bias into counselor education Boysen, G. A. (2010). Integrating Implicit Bias Into Counselor Education. Counselor Education & Supervision, 49 (4), 210–227.

- Cognitive Biases and Errors as Cause—and Journalistic Best Practices as Effect Christian, S. (2013). Cognitive Biases and Errors as Cause—and Journalistic Best Practices as Effect. Journal of Mass Media Ethics, 28 (3), 160–174.

- Empathy intervention to reduce implicit bias in pre-service teachers Whitford, D. K., & Emerson, A. M. (2019). Empathy Intervention to Reduce Implicit Bias in Pre-Service Teachers. Psychological Reports, 122 (2), 670–688.